Everyone’s chasing LLM rankings that don’t exist. Large language models don’t sort content by authority or domain score — they learn associations from training data. The brands that show up in AI-generated answers aren’t winning an algorithm. They’re deeply woven into the conversations that matter. If your content strategy is built on search engine logic, you’re optimizing for the wrong machine. Here’s what actually drives AI visibility.

LLM SEO Is Built on a False Premise: Here’s What Actually Matters

The SEO industry loves a new playing field. And right now, everyone is racing to figure out how to ‘rank’ in AI-generated answers. Agencies are publishing guides. Consultants are selling audits. The term ‘LLM SEO’ is everywhere.

There’s just one problem: the entire framing is wrong.

LLMs don’t rank content the way search engines do. They don’t have a list. There’s no algorithm secretly sorting your blog posts by authority score. The advice being sold right now is mostly search engine logic dressed up in AI language — and if you follow it, you’re optimizing for a system that doesn’t actually exist.

This article breaks down how LLMs actually work, what they genuinely retain from training data, and what that means for how you should think about visibility in an AI-first world.

1. The Myth of LLM Rankings

When a new technology arrives, people instinctively map it onto something familiar. Search engines arrived, so we built SEO. Social platforms emerged, so we built social media marketing. Now that LLMs are everywhere, the industry is naturally trying to build ‘LLM SEO.’

The problem is that LLMs and search engines are fundamentally different machines. A search engine crawls the web continuously, indexes pages, scores them across hundreds of signals (backlinks, freshness, authority, relevance), and returns a ranked list. The whole system is built around retrieval and ranking.

An LLM doesn’t do any of that. It learned from a massive text snapshot during training. It compressed patterns, associations, and relationships from that data into billions of numerical weights. It doesn’t store a ranked list of sources. It doesn’t remember URLs, bylines, or publication names in the way a search index does.

There is no ranking inside a large language model. The concept simply doesn’t apply.

Yet the industry keeps publishing content about ‘how to rank in ChatGPT’ or ‘LLM ranking factors.’ It’s not that the people writing this are dishonest — it’s that they’re pattern-matching to a familiar model when the technology actually requires a new one.

2. How LLMs Actually Process Information During Training

To understand what you’re really optimizing for, you need a basic grasp of how LLMs learn.

Training an LLM involves exposing it to enormous amounts of text — web pages, books, articles, forums, documentation, and more. The model reads this text and learns to predict the next word or phrase in a sequence. Do this billions of times across trillions of tokens, and the model begins to develop something that looks a lot like understanding.

But here’s the critical detail: the model doesn’t bookmark the sources. It doesn’t create a database of ‘Article A said X’ and ‘Brand B said Y.’ What it builds is a web of associations — which words, concepts, and entities tend to appear near each other, how often, and in what contexts.

Think of it less like a library catalog and more like a mind that has read everything and remembers the ideas, not the page numbers.

This means that after training, if you ask the model about cybersecurity threats, it draws on a distributed sense of which brands, researchers, frameworks, and concepts are consistently linked to that topic — not on a ranked list of authoritative domains.

The implication is significant: what matters is whether your brand, name, or concept is woven into the fabric of relevant conversations across the training corpus. Not whether your site has a high authority score.
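To make the next-word idea concrete, here is a minimal sketch of a bigram counter, a drastic simplification of how a real LLM is trained. The corpus, the source names, and the helper functions `train_bigram` and `predict_next` are all hypothetical and chosen only for illustration. What it shows is that the learned structure holds word-to-word statistics and nothing about where each sentence came from.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus: three "documents" keyed by made-up source names.
corpus = {
    "site-a.example": "zero trust reduces breach risk",
    "site-b.example": "zero trust requires identity checks",
    "forum.example": "zero trust reduces lateral movement",
}

def train_bigram(texts):
    """Count which word follows which. Source names are never recorded."""
    counts = defaultdict(Counter)
    for text in texts:
        words = text.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    return followers.most_common(1)[0][0] if followers else None

# Only the text is passed in; the keys (sources) are dropped at training time.
model = train_bigram(corpus.values())
print(predict_next(model, "trust"))  # reduces
```

A real model replaces the count table with billions of learned weights and conditions on far more than one previous word, but the same point holds: the sources are inputs to training, not entries in the result.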

3. Why the ‘Domain Authority’ Playbook Fails Here

In traditional SEO, domain authority is a meaningful proxy. Sites with more quality backlinks tend to rank better. Google uses link signals as a form of peer review — if many credible sites link to you, you’re probably credible too.

Marketers have naturally tried to carry this thinking into LLM visibility. The assumption is: if your site has high authority, AI models will ‘trust’ it more and surface your content in answers.

This is where the logic breaks down.

LLMs don’t read your domain authority score. They don’t know what Moz or Ahrefs says about your site. During training, they processed raw text — and while high-authority sites likely appeared more frequently in training data (because they’re linked to more and thus crawled more), that’s an indirect correlation, not a direct mechanism.

More importantly, if a concept, brand, or perspective is strongly associated with a topic in the training data, regardless of where it appears, the model will carry that association forward. A scrappy independent researcher who writes definitive content on a topic that gets widely discussed and referenced will have more LLM presence than a large site that rarely gets cited in context.

The variables that actually matter are quite different from traditional SEO metrics:

- How frequently does your brand or content appear in the training corpus?

- In what contexts does it appear — and are those contexts relevant to your target topics?

- Are other sources referencing, discussing, or building on your ideas?

- Is the language around your brand consistently associated with specific areas of expertise?

These are questions about presence and association, not authority scores.

4. Co-occurrence: The Signal That Actually Sticks

If domain authority is the wrong signal to optimize for, what’s the right one? The honest answer is co-occurrence — the pattern of which concepts and entities appear together, how often, and in what framing.

When an LLM encounters the word ‘cybersecurity’ repeatedly alongside certain brand names, it develops an implicit association between that brand and cybersecurity expertise. When ‘content strategy’ regularly appears alongside a specific author’s name across multiple publications, the model begins to associate that person with that domain.

This isn’t ranking. It’s association. And it’s built up over time through consistent, contextual presence in places where your topic is genuinely discussed.
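As a rough illustration of co-occurrence, the sketch below counts how often pairs of terms appear in the same document. The documents, the brand names (`acme`, `globex`, `initech`), and the `cooccurrence` helper are invented for this example. An LLM does not keep an explicit table like this, so treat it as an analogy for the statistical signal, not a description of model internals.

```python
from collections import Counter
from itertools import combinations

# Hypothetical mini-corpus; brand names are placeholders.
documents = [
    "acme publishes a cybersecurity threat report",
    "analysts cite acme on cybersecurity trends",
    "globex launches a new accounting product",
    "cybersecurity teams compare acme and initech tools",
]
entities = {"acme", "globex", "initech", "cybersecurity", "accounting"}

def cooccurrence(docs, terms):
    """Count, for each pair of tracked terms, the documents containing both."""
    pair_counts = Counter()
    for doc in docs:
        # Sort so each pair always has the same (alphabetical) key order.
        present = sorted(terms & set(doc.split()))
        pair_counts.update(combinations(present, 2))
    return pair_counts

counts = cooccurrence(documents, entities)
print(counts[("acme", "cybersecurity")])    # 3
print(counts[("cybersecurity", "globex")])  # 0
```

In this toy corpus, `acme` shares a document with `cybersecurity` three times and `globex` never does, regardless of which site published each sentence.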

The question isn’t ‘how do I rank for this keyword in an LLM?’ The question is: ‘when this topic comes up anywhere on the internet, is my brand part of that conversation?’

This reframing matters because it changes where you invest. Instead of chasing metrics that don’t transfer, you’re focused on:

- Being genuinely useful in your space, so others naturally reference you

- Appearing consistently in the places where your audience discusses problems you solve

- Creating content that gets quoted, cited, embedded, and discussed — not just found

- Building a reputation dense enough that your name becomes part of the vocabulary around your topic

Co-occurrence isn’t a tactic. It’s what happens when you’re actually the best at what you do, and the internet reflects that over time.

5. What Happens When LLMs Go Live: Runtime vs. Training

There’s an important wrinkle here that changes the picture a little: modern LLMs don’t always rely solely on training data.

When you ask ChatGPT or another AI assistant a question about recent events, it often does something under the hood: it fans out queries to a search engine, pulls back fresh results, and uses that information alongside its trained knowledge to formulate a response. This is sometimes called retrieval-augmented generation, or RAG.

This is where traditional SEO signals do come back into play — but only for the retrieval layer. If an LLM is pulling live web results to answer a question, the pages it retrieves will be influenced by search engine rankings. Your technical SEO, your backlinks, your structured data — these still matter for getting into that retrieved context window.

But this is a different mechanism from training influence, and conflating the two is where most LLM SEO advice goes wrong.

Here’s a simple way to think about it:

- Training influence: Built over months or years. Determined by how present and contextually relevant your content was across the web before the model’s knowledge cutoff. No direct lever to pull.

- Runtime retrieval: Happens in real time when the model fetches fresh content. Influenced by search engine rankings, page quality, and structured content that LLMs can parse easily.

A complete strategy addresses both — but it treats them as separate problems rather than lumping them into a single ‘LLM ranking’ framework.
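The runtime retrieval path can be sketched in a few lines. This is a simplified stand-in under stated assumptions: `score` uses plain word overlap where a real system would use a search engine or embedding index, the page URLs are placeholders, and `build_prompt` only assembles text without calling any model.

```python
def score(query, page_text):
    """Crude relevance: number of distinct query words found in the page."""
    return len(set(query.lower().split()) & set(page_text.lower().split()))

def retrieve(query, pages, k=2):
    """Return the k highest-scoring pages (a stand-in for a search engine)."""
    ranked = sorted(pages, key=lambda p: score(query, p["text"]), reverse=True)
    return ranked[:k]

def build_prompt(query, retrieved):
    """Place retrieved text in front of the question for the model to read."""
    context = "\n".join(f"[{p['url']}] {p['text']}" for p in retrieved)
    return f"Answer using the sources below.\n{context}\nQuestion: {query}"

# Hypothetical pages; URLs are placeholders.
pages = [
    {"url": "https://a.example/rag",
     "text": "retrieval augmented generation fetches live pages"},
    {"url": "https://b.example/seo",
     "text": "search rankings influence which pages retrieval sees"},
    {"url": "https://c.example/cake",
     "text": "chocolate cake recipe with frosting"},
]

query = "how does retrieval augmented generation work"
top = retrieve(query, pages, k=2)
prompt = build_prompt(query, top)
print(prompt)
```

Only pages that survive the retrieval step reach the prompt, which is why ranking signals matter at this layer and only at this layer.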

6. What You Should Actually Be Optimizing For

So if the old playbook doesn’t apply cleanly, what does a grounded, effective strategy look like? Here’s a practical way to think about it.

For training influence — play the long game.

You can’t inject yourself into an LLM’s weights after it’s been trained. What you can do is ensure that by the time the next generation of models trains on fresh data, your brand is well-represented in the right contexts.

- Write content that gets genuinely referenced — cited in articles, linked in newsletters, and discussed in communities.

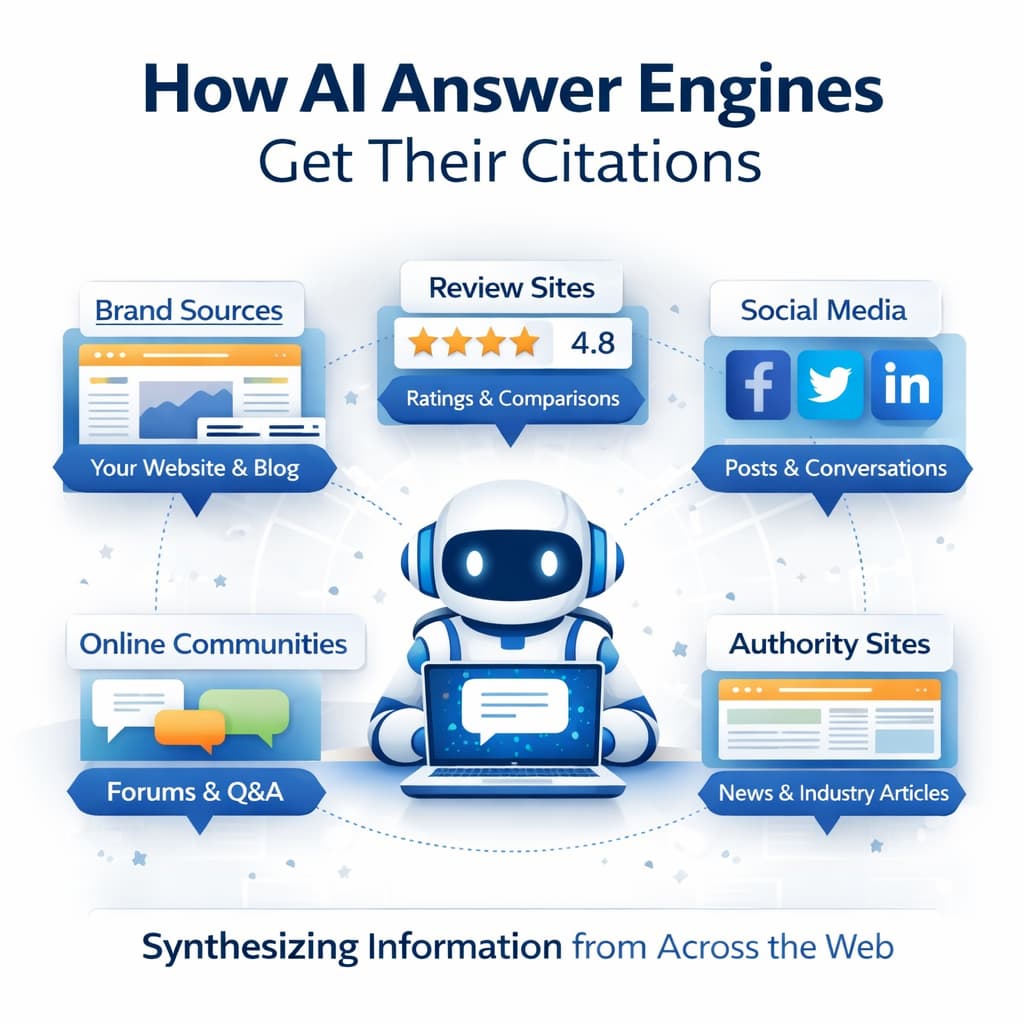

- Pursue mentions in high-traffic, high-text-density environments: industry publications, podcasts with transcripts, Wikipedia-adjacent sources, and Q&A platforms.

- Use consistent language around your areas of expertise so that associations build up clearly over time.

- Prioritize being helpful in conversations you don’t control — on forums, Talking City, Reddit, LinkedIn, and in expert discussions.

For runtime retrieval — apply smart, modern SEO.

When LLMs pull live results, they need content they can actually parse and use. This means:

- Clear, structured content that directly answers questions — not content padded for word count.

- Well-organized headers, concise definitions, and factual precision.

- Schema markup and technical hygiene that make your content machine-readable.

- Fast, accessible pages that search engines (and by extension, LLM retrieval layers) can actually index.
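As one example of machine-readable markup, the sketch below builds a schema.org `FAQPage` object as JSON-LD. The helper name `faq_jsonld` is hypothetical, and the question and answer text are taken from the FAQ in this article. Whether a given retrieval layer actually reads this markup is an assumption, not something this article establishes.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage structure from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

faq = faq_jsonld([
    (
        "Does domain authority affect LLM responses?",
        "Not directly. LLMs don't read authority scores.",
    ),
])

# The JSON output would typically be embedded in the page inside a
# <script type="application/ld+json"> element.
print(json.dumps(faq, indent=2))
```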

For brand association — think in ecosystems, not articles.

The question isn’t ‘did I write a good blog post?’ It’s ‘is my brand recognizably associated with this topic across the web?’ That requires showing up in multiple formats, on multiple platforms, in multiple voices, not just your own.

7. A New Mental Model for AI Visibility

The biggest shift required here is conceptual. Search engine optimization is, at its core, about working within a ranking system — understanding its signals and optimizing for them. That mental model is useful, but it doesn’t transfer cleanly to a world where the primary output mechanism isn’t a ranked list but a generated response.

AI visibility is about something closer to reputation management at scale. The question is: how thoroughly and accurately does the AI’s knowledge of your brand, expertise, and perspective reflect reality?

That’s a function of:

- Volume — how much credible text about you or your ideas exists

- Context — whether that text connects you to the right topics and problems

- Consistency — whether the associations built up over time are coherent and reinforcing

- Quality of association — whether the content linking you to your expertise area is substantive, not superficial

None of this is new. It’s essentially what good PR, thought leadership, and content strategy have always tried to achieve. The difference is that now the audience includes AI models that will synthesize and reproduce what they’ve learned — and the better your signal in that training data, the more accurately and frequently you’ll appear in AI-generated responses.

Stop trying to reverse-engineer the algorithm. There isn’t one to reverse-engineer. Start building the kind of presence that makes you the obvious association when your topic comes up — anywhere, anytime, in any format.

That’s not a loophole. It’s just being genuinely good at what you do, and making sure the internet knows it.

Frequently Asked Questions

Can you do SEO for LLMs?

Not in the traditional sense. LLMs don’t rank content like search engines. You can influence AI visibility by building strong topic associations in training data and maintaining good technical SEO for live retrieval layers.

What is co-occurrence in the context of LLMs?

Co-occurrence refers to how often your brand, name, or concepts appear alongside relevant topics across the web. LLMs carry these associations forward from training data — the more consistent and contextual your presence, the stronger the association.

Does domain authority affect LLM responses?

Not directly. LLMs don’t read authority scores. High-authority sites may appear more in training data, but what matters most is contextual co-occurrence — being consistently linked to your expertise topics across credible sources.

What is retrieval-augmented generation, and why does it matter?

RAG is when an LLM fetches live search results at runtime to supplement its training knowledge. For this layer, traditional SEO signals do matter — clear, structured, well-indexed content improves your chances of being retrieved.

How do I improve my brand’s visibility in AI-generated answers?

Focus on earning genuine references in industry content, being cited across multiple platforms, using consistent language around your expertise, and producing clear, structured content that LLMs can parse and use in real-time retrieval.
