AI recommendation poisoning is silently corrupting the systems that shape what you buy, watch, and believe. From fake reviews to prompt injection, attackers are exploiting every weakness in modern AI. This comprehensive guide breaks down how these attacks work, who’s most vulnerable, and the proven defense strategies your organization needs to stay protected.

Protecting AI Systems from Recommendation Poisoning: A Complete Guide

Table of Contents

- Introduction to AI Recommendation Poisoning

- Understanding AI Recommendation Systems

- The Anatomy of an AI Recommendation Poisoning Attack

- Types of Poisoning Attacks

- Real-World Examples and Case Studies

- Who Is at Risk

- Detection Strategies

- Defense and Mitigation Strategies

- Ethical and Societal Implications

- AI Recommendation Poisoning in the Age of LLMs and Agentic AI

- Building a Poisoning-Resilient AI Culture

- Key Takeaways and Recommendations

- Frequently Asked Questions (FAQ)

- Glossary of Key Terms

- Further Reading and Resources

AI Recommendation Poisoning: A Comprehensive Guide

1. Introduction to AI Recommendation Poisoning

1.1 What Is AI Recommendation Poisoning?

AI recommendation poisoning is a cyberattack strategy in which bad actors deliberately corrupt the data, signals, or feedback that power AI recommendation systems, pushing those systems to produce biased, misleading, or harmful outputs. Think of it as hacking not the software, but the intelligence behind it.

Unlike traditional hacks that break into servers, poisoning attacks manipulate what the AI learns. This makes them far more subtle and harder to catch. By the time the damage shows up in results, the source is already buried deep in training history or user interaction logs.

1.2 Why It Matters in Today’s AI Landscape

AI recommendation engines now shape what billions of people buy, watch, read, and believe. When these systems get poisoned, the consequences range from a brand losing market position to public opinion being quietly steered at scale. The attack surface has never been larger.

With AI woven into healthcare diagnostics, financial advising, and news delivery, the stakes of recommendation poisoning go well beyond inconvenience. A corrupted model in the wrong domain can result in serious financial, medical, or social harm. This is no longer a niche security concern; it’s a mainstream risk.

1.3 Scope and Audience of This Guide

This guide is written for developers building AI-powered products, security professionals tasked with protecting them, and product managers who need to understand the risks in plain language. It covers how poisoning works, where it happens, and what you can do about it.

Whether you’re building a recommendation feature for the first time or auditing an existing one, this guide gives you the vocabulary, context, and practical tools to take AI recommendation security seriously from day one — not as an afterthought.

2. Understanding AI Recommendation Systems

2.1 How Recommendation Engines Work

A recommendation engine learns patterns from data — what users click, purchase, skip, or rate — and uses those patterns to predict what they’ll want next. The more data it ingests, the more confident its predictions become. That confidence is also its greatest vulnerability.

Most engines rely on user behavior signals like dwell time, clicks, purchases, and ratings. These signals feel objective, but they’re actually easy to fake. When an attacker controls even a small slice of those signals, they can nudge an entire model’s behavior in a targeted direction over time.

2.2 Types of Recommendation Systems

There are three main types: collaborative filtering (based on what similar users liked), content-based filtering (based on item attributes), and hybrid systems that blend both. Each has a different attack profile, so different poisoning strategies work better against each.

Collaborative filtering is particularly vulnerable because it relies heavily on community-wide behavior, making it easy to skew with fake accounts. Content-based systems are more resilient to crowd manipulation but can be fooled through metadata manipulation. Hybrids inherit risks from both worlds.

2.3 Key Vulnerabilities by Design

Every recommendation system has a feedback loop at its core: it shows content, measures reactions, and updates its model accordingly. This loop is efficient by design — but it means a poisoned signal gets reinforced over time rather than diluted. The system will confidently serve bad recommendations after enough manipulation.

Other structural weaknesses include cold-start problems (new users and items have little history, so a handful of signals can sway what is recommended), lack of diversity enforcement (popularity bias amplifies poisoning), and opaque training pipelines where anomalous data points slip through without triggering alerts.

3. The Anatomy of an AI Recommendation Poisoning Attack

3.1 Attack Surface Overview

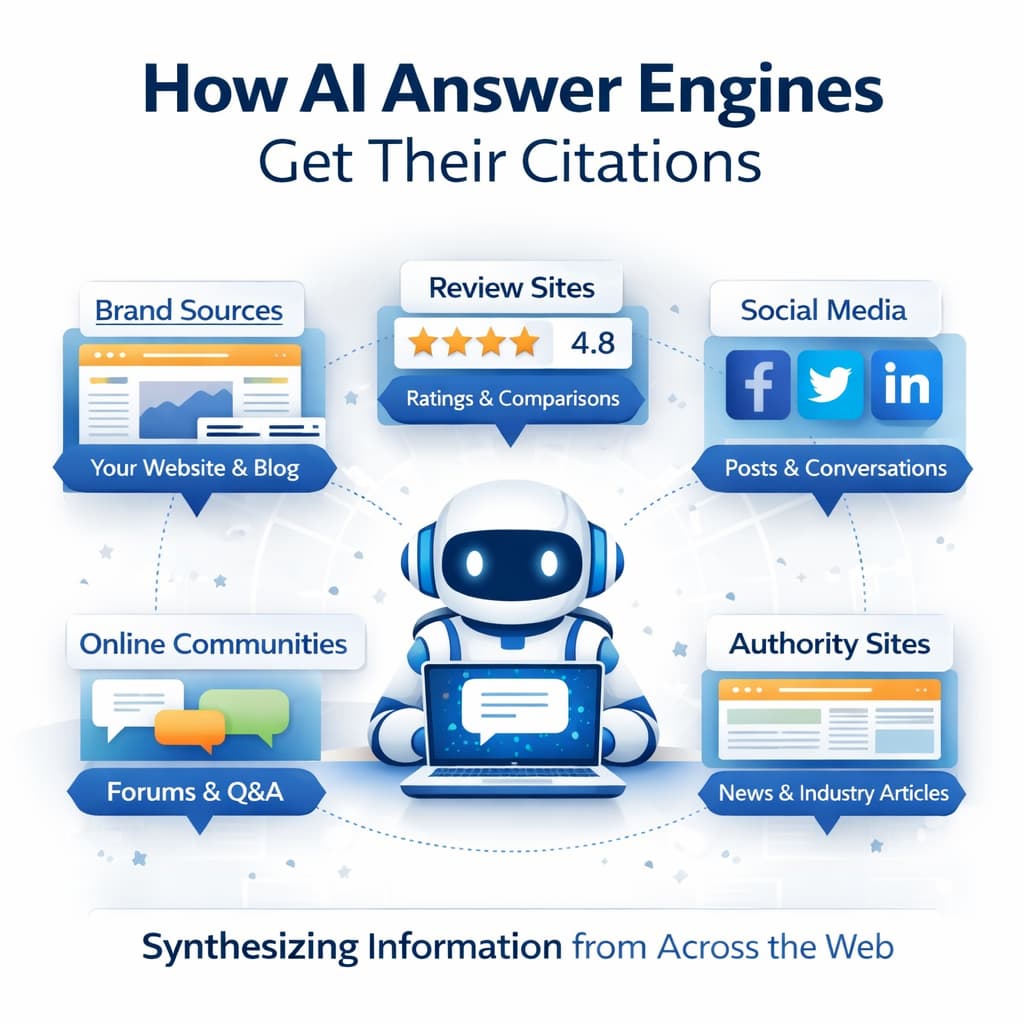

The attack surface of a recommendation system includes every point where data enters or influences the model: user behavior logs, item metadata, ratings and reviews, feedback APIs, and even the model’s retraining pipeline. Each one is a potential entry point for adversarial manipulation.

Attackers don’t need deep technical access to exploit these surfaces. Many poisoning attacks are conducted entirely through the front-end — fake accounts clicking, rating, and interacting with content in scripted patterns. The system sees normal-looking behavior; the impact builds invisibly beneath the surface.

3.2 The Attacker’s Goals and Motivations

Motivations vary widely. A competitor might want to bury a rival’s products in search rankings. A state actor might want to flood news feeds with specific narratives. A fraudster might want to push low-quality or counterfeit goods to the top of shopping results. The goal shapes the attack strategy.

In some cases, attackers aren’t after competitive advantage at all — they want to undermine trust in the AI system itself. Causing visible, embarrassing failures in a recommendation engine damages the platform’s reputation and erodes user confidence. For platforms that rely on trust as a core asset, this is a serious threat.

3.3 Attack Lifecycle: From Injection to Impact

A typical poisoning attack follows a pattern: preparation (creating accounts or access), injection (feeding manipulated data), incubation (waiting for the model to retrain and absorb the poisoned signals), and exploitation (observing the skewed outputs and benefiting from them).

The incubation phase is what makes these attacks so tricky to catch. There’s often a significant delay between when the data is poisoned and when the effect becomes visible. By the time anomalies show up in outputs, the cause is already buried under layers of legitimate-looking training history.

4. Types of Poisoning Attacks

4.1 Data Poisoning

Data poisoning involves injecting corrupted or misleading information directly into a model’s training dataset. This could be fabricated reviews, fake engagement signals, or altered item metadata. The model treats this data as ground truth and learns from it — with predictably bad results.

This is one of the most damaging attack types because it corrupts the model at its foundation. Cleaning it up often requires identifying and removing the poisoned data, retraining the model from scratch, and validating outputs—a costly, time-consuming process, especially at enterprise scale.

4.2 Feedback Loop Manipulation

Feedback loop manipulation exploits the iterative nature of recommendation systems. Attackers generate artificial engagement — clicks, views, purchases — that fool the model into thinking certain items or content are genuinely popular. The system amplifies these items, creating a self-reinforcing cycle.

This attack is particularly effective on platforms with real-time personalization, where the feedback loop runs continuously. Fake signals get absorbed almost immediately and can produce noticeable results within hours. Detecting it requires baselining normal engagement patterns and flagging deviations early.
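One simple way to baseline engagement is a z-score check against recent history. The sketch below is illustrative only: the function names, the hourly click counts, and the threshold of 3.0 are all assumptions, and a production system would also need to account for seasonality and trends.

```python
from statistics import mean, stdev


def engagement_zscore(baseline, observed):
    """How many standard deviations `observed` sits from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation; needs at least 2 points
    if sigma == 0:
        return 0.0 if observed == mu else float("inf")
    return (observed - mu) / sigma


def is_anomalous(baseline, observed, threshold=3.0):
    """Flag an observation whose z-score magnitude exceeds the threshold."""
    return abs(engagement_zscore(baseline, observed)) > threshold


# Hypothetical hourly click counts for one item during a quiet period.
hourly_clicks = [100, 110, 95, 105, 90, 100, 98, 102]
print(is_anomalous(hourly_clicks, 104))  # small deviation from the baseline
print(is_anomalous(hourly_clicks, 400))  # large deviation from the baseline
```

A flag here is a prompt to investigate, not proof of an attack: genuine viral moments produce the same kind of spike.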

4.3 Sybil Attacks

A Sybil attack floods the system with a large number of fake identities (bots or compromised accounts), all coordinating to influence the recommendation engine in a specific direction. The name comes from the book Sybil, a case study of a woman diagnosed with multiple personalities, and the attack exploits the democratic nature of collaborative filtering.

Because collaborative filtering relies on the crowd, a sufficiently large fake crowd can override the real one. This is especially effective when the attacker can coordinate many accounts to behave in slightly different ways, avoiding the appearance of obvious bots — mimicking organic, varied human behavior at scale.

4.4 Adversarial Input Attacks

Adversarial input attacks craft specific inputs designed to confuse or mislead the recommendation model. These inputs often look perfectly normal to humans but contain subtle patterns that push the model toward unintended outputs, such as showing a specific product or hiding another from the results.

These attacks are particularly concerning for deep learning-based recommenders, which can be sensitive to small, structured perturbations in input data. Defending against them often requires adversarial training — exposing the model to attack examples during training so it learns to recognize and resist them.

4.5 Model Inversion and Extraction Attacks

Model inversion attacks leverage the system’s outputs to reconstruct private information about the training data — such as personal preferences or sensitive behavioral patterns. Model extraction attacks go further, using queries to reverse-engineer a copy of the proprietary model itself.

Both are serious threats to privacy and IP. An attacker who extracts your recommendation model can study its weaknesses at leisure, then craft highly targeted poisoning strategies. Defending against these requires output perturbation, query rate limiting, and monitoring for unusual patterns in API queries.

4.6 Prompt Injection in LLM-Based Recommenders

As recommendation systems increasingly rely on large language models to interpret user intent and generate personalized suggestions, a new attack vector has emerged: prompt injection. An attacker embeds malicious instructions into content the LLM reads—and the model follows them.

For example, a product description might contain hidden text that instructs the model to rank that product above all others. The LLM, treating the instruction as legitimate context, may comply. This attack is uniquely difficult to defend against because it exploits the model’s language comprehension, its greatest strength.

5. Real-World Examples and Case Studies

5.1 E-Commerce Product Ranking Manipulation

E-commerce platforms have long dealt with fake review farms: organized operations that generate thousands of positive reviews for specific products. These reviews feed directly into recommendation algorithms, inflating product rankings and sending those items to the top of search results and suggested lists.

Amazon, Alibaba, and other major marketplaces have invested heavily in detecting fake reviews. Despite this, the practice persists because it works. Even a temporary boost in rankings can generate enough real sales to permanently improve a product’s organic position — making the poisoning effect self-sustaining even after fake reviews are removed.

5.2 Social Media Feed Hijacking

Social media recommendation algorithms have been manipulated to artificially amplify certain content — political narratives, viral misinformation, or coordinated campaigns. This has been documented across multiple platforms in the context of elections, public health crises, and civil unrest.

Coordinated inauthentic behavior — networks of accounts designed to look real but acting in sync — is the primary mechanism. These networks don’t just spread content organically; they exploit the engagement signals that recommendation algorithms use to decide what goes viral, essentially teaching the algorithm to prioritize their content.

5.3 Search Engine Result Poisoning

Search engine recommendation and autocomplete systems have been manipulated through coordinated query volume, SEO spam, and targeted link farms. These attacks exploit the systems’ reliance on aggregate user behavior to determine what’s relevant and trustworthy.

Google’s autocomplete feature has historically surfaced offensive or misleading completions due to coordinated search behavior. When enough users search for the same damaging phrase, the system learns to suggest it. This makes the search engine itself a vehicle for spreading harmful associations — even unintentionally.

5.4 Streaming Platform Manipulation

Streaming platforms like Spotify and YouTube have faced manipulation from artists and labels using bot streams to inflate play counts and push content into algorithm-driven playlists. Spotify has removed hundreds of millions of fake streams; YouTube regularly purges artificially inflated view counts.

The challenge for these platforms is that the signals being manipulated (plays, watches, completions) are the same ones that drive discovery and revenue. Every defensive measure has to catch fraudulent engagement without suppressing genuine enthusiasm or incorrectly penalizing legitimate artists.

5.5 Financial and News Recommender Exploits

Financial recommendation systems have been targeted to push specific assets — most visibly in pump-and-dump schemes where social media sentiment is artificially manipulated to trigger algorithmic buy recommendations. The GameStop short squeeze exposed how interconnected social signals and financial algorithms had become.

News recommenders face similar threats. By flooding platforms with SEO-optimized but misleading articles and amplifying them through bot engagement, bad actors can get fabricated or slanted content into mainstream recommendation feeds — reaching real audiences who assume the algorithm has already filtered for quality and accuracy.

6. Who Is at Risk

6.1 E-Commerce Platforms

Any platform that recommends products based on user behavior is at risk. The competitive pressure in e-commerce creates strong financial incentives for manipulation — a few positions higher in search results can mean millions in additional revenue. Smaller platforms with less sophisticated detection are especially vulnerable.

6.2 Social Media and Content Networks

Social networks are high-value targets because their recommendation systems directly influence public opinion, news consumption, and cultural discourse. The scale of potential impact — billions of daily active users — makes them attractive to state actors, political operatives, and commercial manipulators alike.

6.3 Healthcare AI Systems

AI systems that recommend treatments, flag symptoms, or prioritize patient cases represent a uniquely high-stakes risk category. Poisoning these systems could delay diagnoses, push inappropriate treatments, or deprioritize critical cases. The consequences of manipulation here aren’t merely financial; they can be a matter of life and death.

6.4 Financial Advisory Platforms

Robo-advisors and AI-powered investment platforms rely on recommendation engines to suggest portfolios, trades, and financial products. These systems are targets for market manipulation, where artificially inflated sentiment signals can push algorithmic recommendations in directions that are profitable for the attacker.

6.5 News Aggregators and Media Outlets

Platforms that curate and surface news based on engagement signals are vulnerable to content farms and coordinated amplification campaigns. When low-quality or misleading content gets boosted by fake engagement, the algorithm treats it as popular and serves it to real audiences as if it were credible journalism.

6.6 Enterprise and SaaS AI Tools

Business tools that recommend vendors, prioritize tickets, suggest hires, or rank proposals are increasingly powered by AI. These systems may not seem like obvious targets, but a competitor or disgruntled insider could manipulate training signals to skew outputs in damaging ways — with serious operational consequences.

7. Detection Strategies

7.1 Anomaly Detection in User Behavior

The first line of defense is monitoring user behavior for patterns that don’t look human. Unusually high click-through rates, identical interaction sequences from multiple accounts, rapid-fire engagement, and impossible geographic activity are all red flags that a recommendation system is being manipulated.

Machine learning-based anomaly detection can flag these patterns in real time — but it requires good baseline data and continuous retraining. Attackers adapt, so detection systems need to evolve alongside the attack strategies they’re meant to catch. Static rule sets become obsolete quickly.
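Before reaching for a learned detector, one of the red flags above (identical interaction sequences from multiple accounts) can be checked with plain grouping. This is a minimal sketch; the session format, the account IDs, and the `min_accounts` cutoff are assumptions.

```python
from collections import defaultdict


def find_duplicate_sequences(sessions, min_accounts=3):
    """Group accounts that performed exactly the same ordered list of actions."""
    groups = defaultdict(set)
    for account_id, actions in sessions.items():
        groups[tuple(actions)].add(account_id)
    return [sorted(ids) for ids in groups.values() if len(ids) >= min_accounts]


# Hypothetical sessions: three accounts replay the same script, one does not.
sessions = {
    "a1": ["view:x", "click:x", "rate:5"],
    "a2": ["view:x", "click:x", "rate:5"],
    "a3": ["view:x", "click:x", "rate:5"],
    "u9": ["view:y", "view:x"],
}
print(find_duplicate_sequences(sessions))
```

Exact matching only catches the least sophisticated scripts. Attackers who add small variations would require fuzzier comparison, which is where learned detectors earn their keep.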

7.2 Statistical Monitoring of Model Outputs

Beyond user behavior, monitor the model’s outputs directly. Sudden shifts in what gets recommended, unexpected items climbing the rankings without a clear organic cause, or sharp changes in recommendation diversity are all signs that the underlying model may have absorbed poisoned signals.

Setting up automated alerts for these statistical shifts — and having a human review process when they trigger — is essential. The goal isn’t to catch every anomaly, but to catch meaningful drift early enough to investigate before the poisoned model does real damage to users or business outcomes.
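A lightweight way to quantify "sudden shifts in what gets recommended" is the overlap between yesterday's and today's top-k lists. The sketch below uses Jaccard similarity; the function names and the 0.5 alert threshold are assumptions to be tuned against your own normal churn.

```python
def topk_jaccard(previous, current):
    """Share of items the two top-k lists have in common (1.0 means identical sets)."""
    a, b = set(previous), set(current)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def has_drifted(previous, current, min_overlap=0.5):
    """True when overlap falls below the level considered normal churn."""
    return topk_jaccard(previous, current) < min_overlap


yesterday = ["a", "b", "c", "d"]
print(has_drifted(yesterday, ["a", "b", "c", "e"]))  # one item changed
print(has_drifted(yesterday, ["x", "y", "z", "a"]))  # most of the list changed
```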

7.3 Red-Teaming and Adversarial Testing

Red-teaming means deliberately trying to poison your own system to see how it responds. Security teams craft realistic attack scenarios — fake account networks, adversarial inputs, manipulated metadata — and measure how much it takes to skew the model’s outputs in a detectable way.

This kind of proactive testing reveals real weaknesses before real attackers find them. It also helps calibrate detection thresholds: if your red team can poison the model with a hundred fake accounts before any alert fires, your detection needs tuning. Red-teaming should be a regular part of the security calendar, not a one-time audit.
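For a deliberately simple model (an item ranked by its mean rating), the red-team question "how many fake accounts does it take?" has a direct answer. The sketch below computes it; the function name and the ratings are hypothetical, and real recommenders will need empirical testing rather than a closed-form check.

```python
def min_fakes_to_reach(genuine_ratings, target_mean, fake_rating=5, limit=10_000):
    """Smallest number of fake ratings that lifts the mean to `target_mean`."""
    total, n = sum(genuine_ratings), len(genuine_ratings)
    for fakes in range(limit + 1):
        # Compare without dividing to avoid rounding surprises.
        if total + fakes * fake_rating >= target_mean * (n + fakes):
            return fakes
    return None  # target not reachable within the limit


# Hypothetical item with ten genuine ratings averaging 2.1 stars.
genuine = [2, 3, 2, 1, 3, 2, 2, 3, 1, 2]
print(min_fakes_to_reach(genuine, 4.0))
```

Comparing this number with the point at which your alerts actually fire shows how much room an attacker has to operate undetected.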

7.4 Audit Trails and Explainability Tools

You can’t investigate what you can’t trace. Every recommendation system should maintain detailed logs of which data influenced which model version, when retraining occurred, and what outputs changed as a result. This audit trail is essential for forensic analysis when a poisoning attack is suspected.
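At its simplest, an audit trail is a mapping from model versions to the data batches they were trained on. The sketch below assumes such a mapping already exists; the version and batch names are invented for illustration.

```python
def affected_versions(lineage, bad_batch):
    """Model versions whose training data included the suspect batch."""
    return sorted(v for v, batches in lineage.items() if bad_batch in batches)


# Hypothetical lineage log: model version -> batches used in training.
lineage = {
    "model-v1": {"batch-001", "batch-002"},
    "model-v2": {"batch-002", "batch-003"},
    "model-v3": {"batch-003", "batch-004"},
}
print(affected_versions(lineage, "batch-003"))
```

With this in place, discovering one poisoned batch immediately tells you which deployed versions need review or rollback.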

Explainability tools — which show why the model made a specific recommendation — help determine whether a recommendation is based on legitimate or suspicious signals. If the model is recommending an item primarily because of a surge of identical five-star reviews from brand-new accounts, that should be visible.

7.5 Human-in-the-Loop Oversight

Automation alone isn’t enough. High-stakes recommendation categories — healthcare, financial advice, breaking news — should have human reviewers in the loop, especially when outputs fall outside expected parameters. A human can catch contextual problems that statistical models miss entirely.

Human review doesn’t mean reviewing every recommendation. It means designing escalation paths so that flagged, anomalous, or high-stakes outputs get human eyes before they reach users. This layer of oversight is both a practical safety net and a trust-building signal for users and regulators.

8. Defense and Mitigation Strategies

8.1 Robust Data Pipeline Design

Defense starts upstream, in how data enters your system. Every data source — user behavior logs, third-party feeds, review APIs — should be treated as potentially adversarial. This means input validation, source authentication, deduplication, and filtering before data ever touches your model’s training pipeline.

Provenance tracking is particularly valuable: knowing exactly where each data point came from and how it was validated enables quarantining suspicious batches without contaminating the entire dataset. Treat your training data with the same security mindset you’d apply to production code.
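The sketch below combines the steps described above (source checks, range validation, deduplication, and batch quarantine) in one filtering pass. The `Record` fields, source names, and batch IDs are assumptions; a real pipeline would carry richer provenance metadata.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    user_id: str
    item_id: str
    rating: int
    source: str    # where the record came from
    batch_id: str  # ingestion batch, used for quarantine


def is_valid(record, trusted_sources):
    """Accept only trusted sources and ratings in the expected 1-5 range."""
    return record.source in trusted_sources and 1 <= record.rating <= 5


def build_training_set(records, trusted_sources, quarantined_batches):
    """Drop quarantined, invalid, and duplicate (user, item) records."""
    seen, clean = set(), []
    for r in records:
        if r.batch_id in quarantined_batches or not is_valid(r, trusted_sources):
            continue
        key = (r.user_id, r.item_id)
        if key in seen:
            continue
        seen.add(key)
        clean.append(r)
    return clean


records = [
    Record("u1", "i1", 5, "first_party", "b1"),
    Record("u1", "i1", 5, "first_party", "b1"),   # duplicate
    Record("u2", "i1", 5, "unknown_feed", "b2"),  # untrusted source
    Record("u3", "i1", 9, "first_party", "b1"),   # rating out of range
    Record("u4", "i2", 4, "first_party", "b3"),   # quarantined batch
    Record("u5", "i2", 3, "first_party", "b1"),
]
clean = build_training_set(records, {"first_party"}, {"b3"})
print([r.user_id for r in clean])
```

Because each record carries its batch ID, quarantining a batch later is a one-line change to the input set rather than a forensic exercise.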

8.2 Differential Privacy Techniques

Differential privacy adds carefully calibrated noise to training data or model outputs, making it much harder for attackers to infer which specific data points the model learned from—and to craft precision attacks that exploit them. It’s a mathematical guarantee of privacy, not just a policy commitment.

The trade-off is a slight degradation in recommendation accuracy, which is acceptable in most contexts where security and privacy are priorities. For sensitive domains like healthcare or finance, that trade-off is clearly worthwhile. Implementing differential privacy requires technical expertise but is increasingly supported by open-source frameworks.
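To make "carefully calibrated noise" concrete, the sketch below applies the Laplace mechanism to a single count, such as the number of users who rated an item. The noise scale is the query's sensitivity divided by the privacy parameter epsilon. The function names are hypothetical, and this is a teaching example only: production systems should use a vetted library, track the privacy budget across queries, and handle floating-point subtleties that this sketch ignores.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count, epsilon, rng, sensitivity=1.0):
    """Release a count with Laplace noise of scale sensitivity / epsilon."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(0)  # seeded only to make the demo repeatable
print(private_count(100, epsilon=1.0, rng=rng))
print(private_count(100, epsilon=0.1, rng=rng))  # smaller epsilon, more noise
```

A smaller epsilon means stronger privacy and noisier answers, which is the accuracy trade-off described above.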

8.3 Diversity-Aware Algorithms

Recommendation algorithms that actively enforce diversity in outputs are more resilient to poisoning. If the model is designed to surface a mix of items rather than converging on a narrow set, it’s harder for an attacker to dominate the recommendation space — their manipulated content competes with a broader pool.

Diversity also improves user experience independently of security benefits. Users who see varied recommendations are less likely to experience filter bubbles and more likely to discover genuinely relevant content. Security-driven diversity and user-experience-driven diversity are aligned goals, not trade-offs.
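One of the simplest diversity constraints is a cap on how many slots any single group (a seller, brand, or category) can occupy in the final list. The sketch below re-ranks an already scored list under such a cap; the function name and the grouping by seller are assumptions.

```python
def rerank_with_group_cap(ranked_items, k, max_per_group):
    """Walk the ranked list in order, skipping items whose group is already full."""
    picked, counts = [], {}
    for item_id, group in ranked_items:
        if counts.get(group, 0) >= max_per_group:
            continue
        picked.append(item_id)
        counts[group] = counts.get(group, 0) + 1
        if len(picked) == k:
            break
    return picked


# Hypothetical ranking in which one seller holds the top three positions.
ranked = [("a1", "seller_A"), ("a2", "seller_A"), ("a3", "seller_A"),
          ("b1", "seller_B"), ("c1", "seller_C")]
print(rerank_with_group_cap(ranked, k=3, max_per_group=2))
```

Even if an attacker inflates every one of their items, the cap limits how much of the list they can take over.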

8.4 Rate Limiting and Identity Verification

Sybil attacks require scale — lots of fake accounts acting quickly. Rate limiting the velocity of reviews, ratings, and interactions per account, combined with identity verification for high-influence actions, dramatically raises the cost of these attacks. Attackers need more time and more resources to achieve the same effect.
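A sliding-window limiter is one common way to cap per-account velocity. The sketch below is a minimal in-memory version; the class name and parameters are assumptions, and a real deployment would typically keep this state in a shared store so that limits hold across servers.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `max_events` per account within `window_seconds`."""

    def __init__(self, max_events, window_seconds):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # account_id -> recent timestamps

    def allow(self, account_id, now):
        events = self._events[account_id]
        # Discard timestamps that have aged out of the window.
        while events and now - events[0] >= self.window_seconds:
            events.popleft()
        if len(events) >= self.max_events:
            return False
        events.append(now)
        return True


limiter = SlidingWindowLimiter(max_events=2, window_seconds=60)
print([limiter.allow("acct-1", t) for t in (0, 1, 2, 61)])
```

Passing `now` in explicitly keeps the class easy to test; in production it would usually come from a monotonic clock.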

Progressive trust is a powerful design pattern: new accounts have limited influence on the recommendation model until they’ve demonstrated legitimate behavior over time. This means a wave of fresh fake accounts has minimal impact, while established accounts carry appropriate weight — and unusual behavior from them gets flagged.
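Progressive trust can be sketched as a weight that ramps up with account age and is applied when aggregating ratings. The 30-day ramp, the halving for unverified accounts, and the function names below are all illustrative assumptions, not recommended values.

```python
def trust_weight(account_age_days, verified, ramp_days=30):
    """Weight grows linearly from 0 to 1 over `ramp_days`; unverified accounts get half."""
    base = min(account_age_days / ramp_days, 1.0)
    return base if verified else base * 0.5


def weighted_rating(ratings):
    """Trust-weighted mean of (rating, weight) pairs, or None if no weight at all."""
    total_weight = sum(w for _, w in ratings)
    if total_weight == 0:
        return None
    return sum(r * w for r, w in ratings) / total_weight


# Ten established accounts rate an item 2 stars; thirty brand-new ones rate it 5.
genuine = [(2, trust_weight(365, verified=True))] * 10
fakes = [(5, trust_weight(0, verified=False))] * 30
print(weighted_rating(genuine + fakes))
```

An unweighted mean of the same ratings would be 4.25, so the weighting is what keeps the fresh accounts from dominating.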

8.5 Federated Learning Safeguards

Federated learning — where the model trains on data that stays on users’ devices — reduces centralized data exposure but introduces its own poisoning risks. A compromised device can send poisoned gradient updates that subtly shift the global model. Defending against this requires Byzantine-robust aggregation strategies.

Techniques such as Krum and coordinate-wise median limit the influence of anomalous updates on the global model, while gradient clipping bounds how far any single update can move it. Federated learning security is an active area of research, and any organization deploying it in production should stay current with the latest defensive techniques.
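Coordinate-wise median is the easiest of these to illustrate. The sketch below aggregates client updates by taking the median of each coordinate and compares the result with a plain mean; the update vectors are invented, and a production system would operate on full model tensors rather than two-element lists.

```python
from statistics import median


def coordinate_mean(updates):
    """Plain averaging: every client has equal pull on every coordinate."""
    return [sum(coord) / len(coord) for coord in zip(*updates)]


def coordinate_median(updates):
    """Per-coordinate median: a minority of extreme values cannot move the result far."""
    return [median(coord) for coord in zip(*updates)]


# Four honest clients and one client sending a wildly inflated update.
updates = [
    [0.10, -0.20],
    [0.12, -0.18],
    [0.09, -0.21],
    [0.11, -0.19],
    [50.0, 50.0],
]
print(coordinate_mean(updates))
print(coordinate_median(updates))
```

The mean is dragged far from the honest updates by the single outlier, while the median stays among them. That robustness holds only while honest clients remain the majority.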

8.6 Regular Model Retraining and Validation

Regular retraining on clean, validated data can flush out the effects of older poisoning attacks. But retraining also introduces risk: if your new training data is itself compromised, you’re accelerating the poisoning rather than reversing it. Validation before retraining is not optional — it’s essential.

Hold-out validation sets, A/B testing of new model versions against behavioral benchmarks, and post-deployment monitoring all help catch problems before they reach users at scale. Treat every model update as a potential vulnerability surface and validate it accordingly before rolling it out broadly.
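A pre-rollout gate can encode these checks as code. The sketch below blocks promotion if the candidate's hold-out hit rate regresses beyond a tolerance, or if it recommends any item from a list of known-bad items. The function names, the tolerance, and the idea of a banned-item list are assumptions for illustration.

```python
def hit_rate(recommend, holdout, k=10):
    """Fraction of held-out (user, item) pairs found in the user's top-k list."""
    if not holdout:
        raise ValueError("holdout set is empty")
    hits = sum(1 for user, item in holdout if item in recommend(user)[:k])
    return hits / len(holdout)


def safe_to_promote(candidate, current, holdout, banned_items, k=10, max_drop=0.02):
    """Reject a candidate that regresses on hold-out data or surfaces banned items."""
    if hit_rate(candidate, holdout, k) < hit_rate(current, holdout, k) - max_drop:
        return False
    users = {user for user, _ in holdout}
    return not any(set(candidate(u)[:k]) & banned_items for u in users)


# Hypothetical models represented as functions from user to ranked item list.
def current_model(user):
    return ["a", "b", "c"]


def poisoned_model(user):
    return ["a", "b", "fraud-1"]


holdout = [("u1", "a"), ("u2", "b")]
print(safe_to_promote(current_model, current_model, holdout, {"fraud-1"}))
print(safe_to_promote(poisoned_model, current_model, holdout, {"fraud-1"}))
```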

9. Ethical and Societal Implications

9.1 Manipulation of Public Opinion

When recommendation systems that shape what news people read and what content they see are poisoned, the effects extend far beyond individual platforms. Coordinated manipulation of these systems has been linked to the spread of political disinformation, radicalization, and the erosion of shared factual ground in democratic societies.

The asymmetry of this threat is troubling: a small, well-resourced actor can influence the information diet of millions of people without those people ever knowing their feeds have been manipulated. This makes recommendation poisoning a tool of information warfare, not just a commercial fraud problem.

9.2 Market Distortion and Unfair Competition

When bad actors manipulate recommendation engines to favor their products or suppress competitors, the free market stops functioning fairly. Smaller, legitimate businesses with better products can go unnoticed while inferior goods with manipulated ratings dominate the platform’s recommendations.

This isn’t just a business ethics problem — it’s an antitrust and consumer protection concern. Regulators in the EU, US, and elsewhere are beginning to examine whether platforms bear legal responsibility for recommendation manipulation on their systems, and what disclosure obligations apply when algorithmic outputs are influenced by bad actors.

9.3 Erosion of Trust in AI Systems

Every high-profile recommendation failure — whether it’s a shopping platform pushing counterfeit goods or a news aggregator surfacing conspiracy theories — chips away at public trust in AI-powered systems broadly. Once users learn that recommendations can be bought or manipulated, they discount them even when they’re legitimate.

This erosion of trust has real economic costs. Platforms depend on recommendation engagement for revenue. If users stop trusting the suggestions, click-through rates fall, conversions drop, and the entire value proposition of the recommendation system degrades. Protecting the integrity of recommendations isn’t just a security priority — it’s a business imperative.

9.4 Regulatory and Legal Considerations

The regulatory landscape around recommendation systems is evolving fast. The EU’s Digital Services Act requires large platforms to audit and mitigate risks from their recommendation systems, including manipulation risks. Similar frameworks are emerging in other jurisdictions. Compliance is becoming a legal requirement, not just a best practice.

Organizations that experience recommendation poisoning attacks may also face legal liability — especially if they had reason to know about vulnerabilities and failed to address them. Documentation of detection efforts, incident response procedures, and defensive investments will be critical evidence in any regulatory or litigation context.

10. AI Recommendation Poisoning in the Age of LLMs and Agentic AI

10.1 New Attack Vectors with Large Language Models

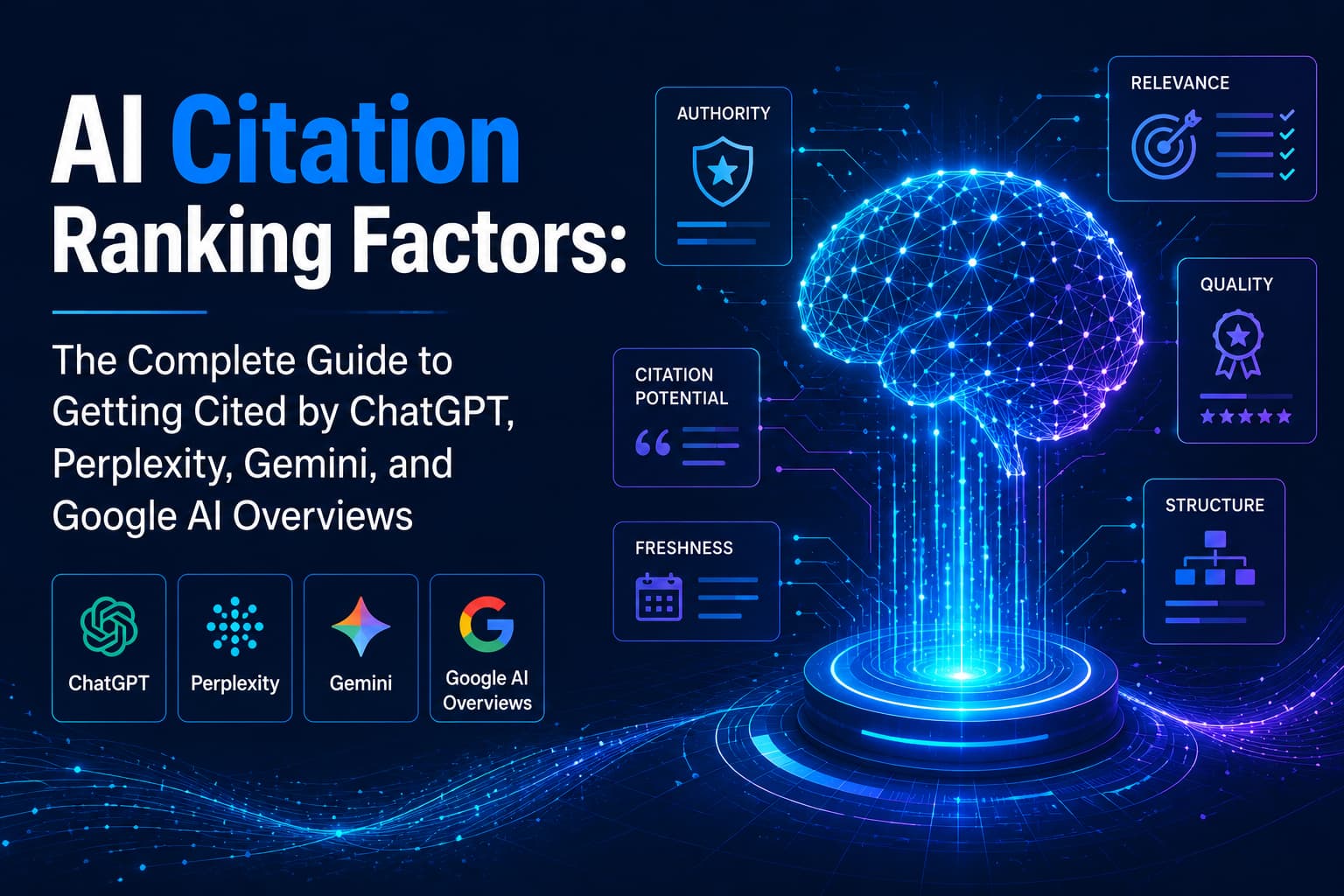

LLM-based recommendation systems introduce entirely new categories of risk. Because they reason about language rather than just behavior signals, they can be manipulated by the content they read — product descriptions, user reviews, news articles — in ways that traditional recommenders cannot.

Indirect prompt injection — where malicious instructions are embedded in third-party content that the LLM processes — is perhaps the most novel and underappreciated attack vector in this space. An attacker doesn’t need access to the system at all; they just need to get their content in front of the model during inference.

10.2 Agentic AI and Autonomous Decision-Making Risks

Agentic AI systems — which take actions autonomously based on recommendations — face compounded risks. A poisoned recommendation that a human user might ignore can be acted on automatically and immediately by an agent. The attack surface expands from influencing human decisions to directly triggering system actions.

As AI agents are deployed to manage financial portfolios, supply chains, and content moderation at scale, the consequences of recommendation poisoning escalate sharply. Defensive architectures for agentic systems must treat every external signal as potentially adversarial and build human confirmation checkpoints into high-stakes decision loops.
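A human confirmation checkpoint can be as simple as a predicate the agent must consult before acting. The sketch below requires approval for high-stakes actions that were either influenced by external content or exceed an amount limit. The action kinds, the field names, and the limit are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical set of action types considered high-stakes.
HIGH_STAKES_KINDS = frozenset({"trade", "payment", "content_removal"})


@dataclass(frozen=True)
class ProposedAction:
    kind: str
    amount: float
    influenced_by_external_content: bool


def requires_human_approval(action, amount_limit=1000.0):
    """High-stakes actions need sign-off if externally influenced or over the limit."""
    if action.kind not in HIGH_STAKES_KINDS:
        return False
    return action.influenced_by_external_content or action.amount > amount_limit


print(requires_human_approval(ProposedAction("trade", 50.0, True)))
print(requires_human_approval(ProposedAction("trade", 50.0, False)))
print(requires_human_approval(ProposedAction("playlist_update", 0.0, True)))
```

The key design choice is that the agent cannot bypass the check: the approval decision lives outside the model, so a poisoned recommendation cannot talk its way past it.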

10.3 Personalization at Scale: Amplified Vulnerabilities

Highly personalized recommendation systems are more useful, and more vulnerable. The more granular a model’s understanding of each user, the more targeted an attack can be. An attacker who knows how to manipulate a specific user’s recommendation profile can tailor an attack to that individual, then repeat the approach across many users.

This personalized attack surface is especially concerning in contexts like political advertising, healthcare, and financial advice, where nudging a specific individual in a specific direction is the whole point. The combination of extreme personalization and adversarial manipulation creates a new generation of influence operations that are nearly invisible at the population level.

11. Building a Poisoning-Resilient AI Culture

11.1 Organizational Policies and Governance

Technical defenses alone aren’t enough. Organizations need clear policies that govern who can access training data, how model changes are approved, what constitutes a security incident, and how to respond when one is detected. These policies need teeth — documentation, enforcement, and accountability.

A governance committee that includes security, data science, legal, and product stakeholders ensures that recommendation system decisions aren’t made in a silo. Cross-functional oversight identifies blind spots that any single team would miss and establishes organizational ownership of security outcomes throughout the product lifecycle.

11.2 Developer and Data Science Best Practices

Data scientists and ML engineers are the frontline of defense. They need to understand poisoning attack vectors, build defensively by default, treat training data as a potential attack surface, and validate model behavior before deployment. This starts with training and continues with code review standards that include security considerations.

Secure ML development practices should include data lineage tracking, adversarial test sets in model evaluation, anomaly thresholds built into monitoring pipelines, and documented rollback procedures for every model version. Security should be baked into the development workflow — not bolted on after the fact.

11.3 Security Team Integration

AI security is increasingly a specialized field, but it should be integrated with, not separate from, the broader security function. Red teams need ML expertise. ML teams need threat modeling skills. The overlap between classical cybersecurity and adversarial machine learning research is where the most important defensive work occurs.

Organizations should invest in cross-training, bring in specialized AI security expertise for audits and assessments, and stay connected to the academic research community where new attack techniques and defenses are published. The threat landscape evolves fast; so must your security team’s knowledge.

11.4 User Education and Transparency

Users who understand that recommendations are algorithmic — and that those algorithms can be manipulated — make better decisions. Platforms that are transparent about how recommendations work, what signals influence them, and how they protect against manipulation build more durable trust than those that treat it as a black box.

User education also serves a practical defensive function: informed users notice and report suspicious recommendation behavior, creating an additional signal that security teams can act on. Treating your user community as a partner in recommendation integrity — rather than just a passive audience — is both ethical and strategically sound.

12. Frequently Asked Questions (FAQ) about AI Recommendation Poisoning

What is AI recommendation poisoning?

AI recommendation poisoning is a cyberattack in which bad actors manipulate data, feedback signals, or training inputs that power AI recommendation systems, causing them to produce biased, misleading, or harmful outputs. Unlike traditional hacks, it targets what the AI learns, making it harder to detect and reverse.

How do attackers manipulate AI recommendation systems?

Attackers manipulate AI recommendation systems by injecting fake reviews, generating bot-driven engagement, creating large networks of fake accounts (Sybil attacks), or embedding malicious instructions inside content that LLMs process (prompt injection). These tactics corrupt the model’s training data or real-time signals, skewing outputs without ever breaching the system directly.

Which industries are most at risk from recommendation poisoning attacks?

E-commerce, social media, healthcare AI, financial advisory platforms, and news aggregators are most at risk. Any platform that uses behavioral signals to personalize content or rank results is vulnerable. High-stakes domains like healthcare and finance face the gravest consequences, because poisoned recommendations there can affect patient outcomes or trigger harmful financial decisions.

How can businesses detect AI recommendation poisoning?

Businesses can detect AI recommendation poisoning by monitoring user behavior for anomalies, tracking sudden shifts in model outputs, running regular red-team exercises, and using explainability tools to trace why specific items are being recommended. Audit trails and human-in-the-loop oversight add critical layers of visibility that automated systems alone cannot provide.
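One simple way to track sudden shifts in model outputs is to compare how recommendation slots are distributed across items in a baseline window and in the current window. The sketch below uses total variation distance for that comparison. The function names, the flat list-of-item-IDs log format, and the 0.2 alert threshold are all assumptions for illustration, not recommended values.

```python
from collections import Counter


def exposure_shares(recommendation_log):
    """Map each item to its share of all recommendation slots in the log."""
    counts = Counter(recommendation_log)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {item: n / total for item, n in counts.items()}


def total_variation(p, q):
    """Total variation distance between two share dicts (0 = identical, 1 = disjoint)."""
    items = set(p) | set(q)
    return 0.5 * sum(abs(p.get(i, 0.0) - q.get(i, 0.0)) for i in items)


def output_shift_alert(baseline_log, current_log, threshold=0.2):
    """Return (distance, alert) where alert is True if the shift exceeds the threshold."""
    distance = total_variation(
        exposure_shares(baseline_log), exposure_shares(current_log)
    )
    return distance, distance > threshold
```

A large distance does not prove poisoning, since promotions and seasonality also shift exposure. It only tells a human reviewer or an explainability tool where to look first.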

What is the difference between data poisoning and prompt injection in AI systems?

Data poisoning corrupts a model’s training dataset with manipulated inputs before or during training. Prompt injection targets LLM-based systems during inference by embedding malicious instructions within the content the model reads. Both distort AI outputs, but prompt injection is often harder to defend against because the model has no reliable way to separate instructions from the data it is asked to process.

What are the best defenses against AI recommendation poisoning?

The most effective defenses include robust data pipeline validation, differential privacy techniques, diversity-aware algorithms, rate limiting, identity verification, and regular adversarial testing. Combining technical safeguards with human oversight and cross-functional governance creates a layered defense that significantly raises the cost and complexity of any poisoning attempt.
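Of the defenses listed above, rate limiting is the easiest to sketch. The example below is a minimal per-account sliding-window limiter. The class name, the `allow` method, and the caller-supplied `now` timestamp are hypothetical design choices made so the example stays self-contained and easy to test.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_events per account within any window_seconds span."""

    def __init__(self, max_events, window_seconds):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # account_id -> accepted timestamps

    def allow(self, account_id, now):
        """Return True and record the event if the account is under its limit."""
        events = self._events[account_id]
        # Drop timestamps that have fallen out of the window.
        while events and now - events[0] >= self.window_seconds:
            events.popleft()
        if len(events) >= self.max_events:
            return False
        events.append(now)
        return True
```

For example, `SlidingWindowLimiter(3, 60)` would accept three ratings from one account within a minute and reject a fourth. Rate limiting alone does little against a Sybil attack spread across many accounts, which is why the answer above pairs it with identity verification.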

Is AI recommendation poisoning illegal?

Depending on the context, AI recommendation poisoning can violate multiple laws, including computer fraud statutes, consumer protection regulations, and emerging AI governance frameworks such as the EU AI Act. Platforms that knowingly allow manipulation may also face regulatory liability. As AI legislation matures globally, the legal consequences for both attackers and negligent platforms are increasing.

13. Key Takeaways and Recommendations

13.1 Summary of Core Concepts

AI recommendation poisoning is the deliberate manipulation of the signals, data, and feedback loops that power recommendation systems to produce biased or harmful outputs. It’s a growing threat across e-commerce, social media, healthcare, finance, and enterprise AI, and it’s getting more sophisticated as AI itself advances.

The core insight is that recommendation systems are only as trustworthy as the data they learn from. Protecting that data — at the point of collection, through the training pipeline, and into production — is the foundational security challenge. Detection, defense, and governance all flow from this starting point.

13.2 Immediate Action Items for Organizations

Start by auditing your training data pipeline for obvious injection points. Implement anomaly detection on user behavior signals today; even basic statistical monitoring is better than none. Run a red-team exercise against your recommendation system before the end of the quarter. These three steps alone can substantially improve your security posture.
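As an illustration of what basic statistical monitoring can look like, the sketch below flags days on which an item’s rating count deviates sharply from its typical level. It uses a robust z-score built on the median and the median absolute deviation (MAD). The function names and the 3.5 cutoff are assumptions for illustration; the right threshold depends on your traffic.

```python
from statistics import median


def robust_z_scores(values):
    """Score each value by its distance from the median, scaled by the MAD."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # At least half the values equal the median, so the scale is undefined.
        # This sketch returns zeros; a real monitor needs a fallback here.
        return [0.0 for _ in values]
    # 0.6745 makes the score comparable to a standard z-score for normal data.
    return [0.6745 * (v - med) / mad for v in values]


def flag_anomalies(daily_counts, threshold=3.5):
    """Return the indices of days whose robust z-score exceeds the threshold."""
    scores = robust_z_scores(daily_counts)
    return [i for i, z in enumerate(scores) if abs(z) > threshold]
```

The median and MAD are used instead of the mean and standard deviation because a single burst of fake ratings would inflate the latter two and partly hide itself.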

If you operate in a regulated domain such as healthcare, finance, or media, review your compliance obligations under emerging AI and platform governance frameworks. The regulatory window for proactive compliance is closing. Organizations that act now will be far better positioned than those that wait for enforcement.

13.3 Long-Term Strategic Recommendations

Build AI security into your product development lifecycle from the start, not as a retrofit. Invest in cross-functional expertise that bridges ML and cybersecurity. Stay current with adversarial ML research: new attack techniques and defenses often appear in academic publications before they surface in production systems.

Think of recommendation integrity as a trust asset, not just a security problem. Platforms that consistently deliver unmanipulated, high-quality recommendations earn user loyalty that translates directly into business value. The long-term return on a recommendation security investment is not just about avoiding harm — it’s about building a competitive advantage through trustworthiness.

14. Glossary of Key Terms

Adversarial Input: A carefully crafted input designed to mislead or confuse an AI model while appearing normal to human observers.

Byzantine Robustness: The ability of a distributed learning system to tolerate malicious or corrupted participants without degrading overall model quality.

Collaborative Filtering: A recommendation approach based on the behavior patterns of similar users rather than the content of items themselves.

Data Poisoning: The injection of corrupted, misleading, or adversarially crafted data into a model’s training dataset.

Differential Privacy: A mathematical framework that adds calibrated noise to data or outputs to protect individual privacy and resist model inversion attacks.

Feedback Loop: The cyclical process where a recommendation system’s outputs generate user signals that feed back into retraining the model.

Federated Learning: A training approach where the model learns from data that stays on user devices, reducing centralized data exposure.

Prompt Injection: An attack on LLM-based systems where malicious instructions are embedded in content the model reads, causing it to follow those instructions.

Red-Teaming: The practice of deliberately attacking your own system to identify and address vulnerabilities before real attackers can exploit them.

Sybil Attack: A manipulation strategy using a large number of fake identities to overwhelm a system’s trust mechanisms and skew its behavior.

15. Further Reading and Resources

Biggio, B., & Roli, F. (2018). Wild patterns: Ten years after the rise of adversarial machine learning. Pattern Recognition, 84, 317-331. A foundational academic overview of adversarial attacks on machine learning systems.

Shafahi, A., et al. (2018). Poison frogs! Targeted clean-label poisoning attacks on neural networks. NeurIPS 2018. Landmark research demonstrating clean-label poisoning — attacks that use correctly labeled data to corrupt model behavior.

Goldblum, M., et al. (2022). Dataset Security for Machine Learning: Data Poisoning, Backdoor Attacks, and Defenses. IEEE Transactions on Pattern Analysis and Machine Intelligence. A comprehensive survey of dataset-level threats to machine learning.

OWASP Top 10 for Machine Learning Security: A practical, practitioner-oriented framework for understanding and addressing the most common ML security risks. Available at owasp.org.

NIST AI Risk Management Framework (AI RMF 1.0): The US government’s framework for managing risks across the AI lifecycle, including adversarial threats to AI systems. Available at nist.gov.

The EU AI Act: Europe’s comprehensive regulatory framework for AI, including provisions related to systemic risk and recommendation system transparency. Full text available at eur-lex.europa.eu.

Adversarial ML Threat Matrix (Microsoft and MITRE), now maintained as MITRE ATLAS: A structured framework for cataloging and reasoning about adversarial threats to machine learning systems. Available at atlas.mitre.org.
